Making a local web server public with localtunnel

May 11, 2010

These days it’s fairly common to run a local environment for web development. Whether you’re running Apache, Mongrel, or the App Engine SDK, we’re all starting to see the benefits of having a production-like environment right there on your laptop so you can iteratively code and debug your app without deploying live, or even needing the Internet.

However, with the growing popularity of callbacks and webhooks, you can only really debug if your script is live and on the Internet. There are also other cases where you need to make what are normally private and/or local web servers public, such as various kinds of testing or quick public demos. Demos are a surprisingly common case, especially for multi-user systems (“Man, I wish I could have you join this chat room app I’m working on, but it’s only running on my laptop”).

The solution is obvious, right? SSH remote forwarding, or reverse tunneling. Use a magical set of options with SSH with a public server you have SSH access to, and set up a tunnel from that machine to your local machine. When people connect to a port on your public machine, it gets forwarded to a local port on your machine, looking as if that port was on a public IP.

The idea is great, but it’s a hassle to set up. You need to make sure sshd is set up properly in order to make a public tunnel on the remote machine, or you need to set up two tunnels, one from your machine to a private port on the remote machine, and then another on the remote machine from a public port to the private port (that forwards to your machine).
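For reference, the manual approach boils down to one ssh invocation with the -R option. Here is a minimal sketch that only builds that command line; the server name, user, and port numbers are placeholders, not anything from a real setup. Note that sshd normally binds remote forwards to the loopback interface unless GatewayPorts is enabled in sshd_config, which is the "set up properly" part.

```python
import shlex


def reverse_tunnel_command(local_port, remote_port, server, user):
    """Build the ssh argument list for a remote (reverse) port forward."""
    # -N: do not run a remote command, only forward ports.
    # -R: listen on remote_port on the server and forward to local_port here.
    forward = f"{remote_port}:localhost:{local_port}"
    return ["ssh", "-N", "-R", forward, f"{user}@{server}"]


if __name__ == "__main__":
    # Placeholder host and user, purely for illustration.
    cmd = reverse_tunnel_command(8080, 9000, "example.com", "me")
    print(shlex.join(cmd))
```

Running the printed command is what opens the tunnel; the sketch deliberately stops short of that so it can be read without a server at hand.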

In short, it’s too much of a hassle to consider it a quick and easy option. Here is the quick and easy option:

$ localtunnel 8080

And you’re done! With localtunnel, it’s so simple to set this up, it’s almost fun to do. What’s more, the publicly accessible URL has a nice hostname and uses port 80, no matter what port it’s on locally. And it tells you what this URL is when you start localtunnel:

$ localtunnel 8080

Port 8080 is now publicly accessible from http://8bv2.localtunnel.com …

What’s going on behind the scenes is a web server component running on localtunnel.com. It serves two purposes: a virtual host reverse proxy to the port forward, and a tunnel register API (try going to http://open.localtunnel.com). This simple API allocates a port to tunnel on, and gives the localtunnel client command the information it needs to set up an SSH tunnel for you. The localtunnel command just wraps an SSH library and does this register call.
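To make the two roles concrete, here is a toy model of a register step plus a virtual host lookup. It is not the real localtunnel server: the class name, the starting port, and the way hostnames are generated are all made up for illustration.

```python
import itertools
import secrets


class TunnelRegistry:
    """Toy model: allocate a private port per tunnel and map a hostname to it."""

    def __init__(self, domain, first_port=9000):
        self.domain = domain
        self._ports = itertools.count(first_port)  # hypothetical port range
        self._by_host = {}

    def register(self):
        """Allocate a port and a random subdomain, as a register call might."""
        host = f"{secrets.token_hex(2)}.{self.domain}"
        while host in self._by_host:  # retry on the rare name collision
            host = f"{secrets.token_hex(2)}.{self.domain}"
        port = next(self._ports)
        self._by_host[host] = port
        return host, port

    def resolve(self, host_header):
        """Reverse proxy side: find the tunnel port for a Host header."""
        host = host_header.split(":")[0].lower()
        return self._by_host.get(host)
```

The client would use the returned port as the remote side of its SSH tunnel, and the proxy would call resolve() on each incoming request.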

Of course, there’s also the authentication part. As a free, public service, we don’t want to just give everybody SSH access to this machine (as it may seem). The user localtunnel on that box is made just for this service. It has no shell. It only has a home directory with an authorized_keys file. We require you to upload a public key for authentication, and we also mark that key with options that say you can only do port forwarding. Although, it can’t be used for arbitrary port forwarding… because it’s only a private port on the remote side, it can only be used with the special reverse proxy.
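As a rough idea of what "marking a key with options" looks like, this sketch formats an authorized_keys entry with a few standard OpenSSH restrictions. Which options the service actually sets is not stated here, so the list below is an assumption.

```python
def restricted_key_line(public_key):
    """Format an authorized_keys entry that leaves only port forwarding usable."""
    # Standard OpenSSH key options; the exact set is an assumption.
    # "no-port-forwarding" is left out on purpose so tunnels still work.
    options = ["no-pty", "no-X11-forwarding", "no-agent-forwarding"]
    return ",".join(options) + " " + public_key.strip()


if __name__ == "__main__":
    # Placeholder key material, not a real key.
    print(restricted_key_line("ssh-rsa AAAAB3Nza... user@laptop"))
```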

So there it is. And the code is on GitHub. You might notice the server is in Python and the client in Ruby. Why? It just made sense. Python has Twisted, which I like for server stuff. And Ruby is great for command line scripts, and has a nice SSH library. In the end, it doesn’t matter what it’s written in. Ultimately it’s a Unix program.

Enjoy!

Notify.io brings notifications to the web

January 26, 2010

In October 2009 I started a project called Notify.io and a month later announced it. I talked about how it will bring notifications to the web. Now that it’s basically alpha complete, I’ll give you a quick walkthrough of what makes it so great.

Overview

At a really high level, you can think of Notify.io as a notification router. As a web service, it provides a singleton endpoint for any web-connected program, whether a web application, desktop application or user script, to send notifications to somebody. For users, you can control what notifications you get and how you get them. In this way, Notify.io is like a global, web-accessible version of the popular Growl application for OS X (which should honestly just ship with OS X). Only it’s even better.

Desktop Notifications

The original inspiration for Notify.io was to make Growl more useful by fixing its ability to receive notifications from the Internet. Out of the box, Growl is effectively only good for notifications from sources running on your machine. If you wanted to get notifications from a web app, you’d have to wait for them to release a desktop notifier, which hopefully would use Growl to actually display the notifications. So you end up with all these desktop notifiers running for some apps, and have no option of desktop notifications for others.

This is probably the killer feature of Notify.io: it lets you get desktop notifications from any web app that supports it, which is an order of magnitude easier for them to do than build their own desktop notifier.

Sources and Outlets

The language of Notify.io is based around Sources and Outlets. Sources are pretty straightforward. They’re a source of notifications. They could represent an application, script, company, person (or perhaps object?) that can send you notifications.

Outlets represent the other major feature of Notify.io. They’re ways you can get a notification. The Desktop Notifier is your first and default outlet, but is just one of several options. Currently supported Outlets besides Desktop Notifier are Email, Jabber IM, and Webhooks. Outlets to look forward to are SMS, Twitter, IRC, and perhaps telephone.

The magic is in routing notifications from Sources to Outlets. Currently this is a simple mapping of Source to Outlet. For example, you can get notifications from Source A on your desktop, while notifications from Source B go to IM. This simplistic routing is just the beginning. We’ll talk about how we’ll do advanced routing when we get to the Roadmap.
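The simple Source-to-Outlet mapping can be pictured as a dictionary lookup with the desktop as the fallback. The names below are illustrative and not taken from the Notify.io code.

```python
def route(routes, source, default_outlet="desktop"):
    """Pick the outlet for a source, falling back to the default outlet."""
    return routes.get(source, default_outlet)


if __name__ == "__main__":
    routes = {"Source B": "im"}  # hypothetical user settings
    for source in ("Source A", "Source B"):
        print(source, "->", route(routes, source))
```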

The Nio Client

For developers, it’s worth mentioning that the pipe for our Desktop Notifier is really just a Comet HTTP stream. It can be consumed by pretty much anything. We were originally talking with Growl and authors of other desktop notifiers of direct integration. This is still a possibility, but just so we could move forward, we built our own client for OS X. Clients for other systems are available (but not yet “officially” supported) or are in progress, including Windows and Android.

Our OS X client is called Nio, short for Notify.io, so you can pronounce it N-I-O, but I tend to pronounce it “neo”. It’s basically just an application that sits in your menu bar listening to HTTP streams (yes, plural) for notifications and pipes them into Growl.

For ease of installing streams, we made it handle files of the extension ListenURL. Once Nio is installed, you can download a ListenURL file containing a URL and it gets installed by Nio. The URL we give you is basically a “capability URL” or secret URL. This means streams are not super secure, but this is by design. If you wanted, you could share your URL with somebody so you both get notifications sent to that Outlet. You can always delete the Outlet and make another to disable that URL.
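A capability URL is just a URL with an unguessable token in it, and deleting the Outlet amounts to forgetting the token. A minimal sketch of that idea, with a made-up base URL and class name:

```python
import secrets


class OutletURLs:
    """Issue secret listen URLs and revoke them by forgetting the token."""

    def __init__(self, base_url):
        self.base_url = base_url.rstrip("/")
        self._tokens = set()

    def create(self):
        token = secrets.token_urlsafe(16)  # unguessable, safe in a URL path
        self._tokens.add(token)
        return f"{self.base_url}/{token}"

    def is_valid(self, url):
        return url.rsplit("/", 1)[-1] in self._tokens

    def delete(self, url):
        # Anyone still holding the old URL simply stops getting a stream.
        self._tokens.discard(url.rsplit("/", 1)[-1])
```

Anyone who has the URL can listen, which is the whole point: sharing is as easy as passing a link, and revoking is as easy as deleting it.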

The other cool thing about our client is that it has a shell script notification hook. This means you can have notifications trigger a shell script that’s passed the notification details. This is pretty powerful because it means you can do things like create your own local logging, hear your notifications with text-to-speech, or make certain notifications trigger a more obtrusive means of notifying you, such as Quicksilver’s Large Type feature. This kind of programmability is central to our approach to design, as you’ll see later on in the Roadmap.

Simple API and Approval Model

For proper adoption, we need web apps to integrate Notify.io, so we have a super simple API for Sources. It’s a simple REST API based on an endpoint constructed by the target of your notification. Like Gravatar, we use an MD5 hash of a user’s email address to identify targets. For example, to send a notification to test@example.com, you’d do an HTTP POST to this URL:

http://api.notify.io/v1/notify/55502f40dc8b7c769880b10874abc9d0

You’d pass a few parameters, with at least your API key (meaning you need an account) and the text you want to send, and optionally an icon URL, link URL, title text and whether the notification should be “sticky”. That’s it. The request should respond immediately so it may be quick enough to be done inline in your app, but we recommend it be done asynchronously.
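Here is a sketch of how a Source might build that request. The endpoint prefix comes from the URL above, but the form field names (api_key, text, and so on) are guesses, so check the API docs before relying on them. Nothing is sent over the network here.

```python
import hashlib
from urllib.parse import urlencode

API_BASE = "http://api.notify.io/v1/notify/"


def notify_endpoint(email):
    """Endpoint for a target: the API prefix plus the MD5 hex digest of the email."""
    digest = hashlib.md5(email.encode("utf-8")).hexdigest()
    return API_BASE + digest


def notify_body(api_key, text, **optional):
    """Form-encoded POST body. Field names are assumed, not confirmed."""
    params = {"api_key": api_key, "text": text}
    params.update(optional)  # e.g. title, icon, link, sticky (hypothetical names)
    return urlencode(params)


if __name__ == "__main__":
    print(notify_endpoint("test@example.com"))
    print(notify_body("my-api-key", "Build finished"))
```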

Then what happens is the first notification you send actually triggers a notification to that user that you want to send them notifications. If they accept, future notifications will be sent and your previous notifications will show up in their history. This may change to replay previous notifications on approval, but the point here is the user has to approve notifications before they get them. In this way, it’s similar to Jabber’s approval model and helps avoid spammers.
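The approval flow reads like a tiny state machine. This toy version follows the description above (first contact asks for approval, everything lands in history, only approved sources get delivered); the class and return values are invented for illustration.

```python
class Inbox:
    """Toy model of per-user approval of notification sources."""

    def __init__(self):
        self.approved = set()
        self.history = {}    # source -> every notification text received
        self.delivered = []  # (source, text) pairs actually shown to the user

    def receive(self, source, text):
        first_contact = source not in self.history
        self.history.setdefault(source, []).append(text)
        if source in self.approved:
            self.delivered.append((source, text))
            return "delivered"
        # Unapproved: the first message prompts the user, later ones just wait.
        return "approval_requested" if first_contact else "held"

    def approve(self, source):
        self.approved.add(source)
```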

Public Service Software

Notify.io and its clients are open source. The service is free. Or rather, it’s not-for-profit donationware. Notify.io is being run under a model I’m developing called POSS, the goal of which is to automate/abstract away the maintenance and funding of its operation. The end result should be: the service exists, it’s open source, and some in the developer community can deploy changes. But no single person is financially responsible for it, and it’s run on maintained cloud infrastructure. In this case it’s mostly App Engine.

This means that Notify.io is not a startup. It’s public infrastructure. Ideally, I’m not even in the loop. It should be a self-sustaining public service. This is not fully realized, but it will be as it starts to consume more resources. For more information, you can read more on POSS or join our discussion group.

For now, the important thing is that Notify.io is open source. This means anybody can contribute bug fixes, new outlets, new desktop clients, etc.

Roadmap

Okay, sure, Notify.io is pretty cool now. But here are some of the major things that will be coming soon. Hopefully with your help!

Advanced Routing and Filters

From the beginning, I wanted really powerful routing and filtering. My evangelism of webhooks has given me the obvious answer to this, but in a more integrated way. Basically, how do you allow any routing scheme imaginable by users? Let them write code. Originally it was going to be powered by Scriptlets, but since I split the eval engine out as DrEval, it will be based on that.

Basically, just imagine a UI with a little textarea for writing JavaScript that can make web calls. Route notifications based on your IM status, your location, what music you’re listening to, arbitrary time schedules, or anything you can code.

More Outlets

Obviously, more Outlets are good. Obvious ones are IRC, SMS, and Twitter DM. With Twilio we can do voice call notifications. Integration with push clients like the iPhone’s Prowl app would be easy to do. Our outlet system is very simple, so you can look at the source of our existing ones, write an outlet and it’s likely we’ll deploy it.

OpenID Support

Right now, you authenticate with Google. I don’t believe in creating authentication systems, and Google was the quickest given the platform. It’s also pretty popular and ensures you have an email address we can use. However, there are plenty of people that don’t like the idea of using their Google Account, so at some point we’ll support OpenID login and then go from there.

Multiple Email Support

Ideally, a web app can use whatever email address you used to register with them to send you notifications. However, unless Gmail is your primary email you use for registration, they’ll still need to ask you for your email. It’s the Gravatar model. So like Gravatar, we’ll need to let you add multiple emails to your account, allowing web applications to be able to send notifications based on any of them.

Convenience Libraries

Our API is simple, but people are lazy. We’re currently working on convenience libraries for popular languages that make it that much easier to integrate with Notify.io. If you use a neat language, you should make a libnio package for it!

Ad-hoc Sources

Sources require an account, which is a bit heavyweight. Sometimes you want to create your own distinct sources to share with others or use in your scripts to easily send yourself notifications. This is the idea of Ad-hoc Sources, inspired by David Reid and capability URLs. The idea is simple: create an ad-hoc source and you get a secret URL. This URL acts just like the notify API endpoint, only you don’t need an API key. You can use this in public scripts or give it to others to send you notifications, and if it’s ever abused or falls in the wrong hands, just delete it and make another.

More Supported Clients

A developer in Japan started a Windows client based on Nio that we’re planning to support as our primary Windows client. Another developer is working on an Android client. iPhone users have Prowl, so once there is a Prowl outlet, you can get them on your iPhone. But Prowl is not free, so perhaps it would be helpful if we had our own iPhone client. There are the beginnings of a Linux/libnotify client. These are all ways you can start contributing to Notify.io. ;)

That’s about it. You can probably see why I describe Notify.io as the open notification platform of the web. It’s simple, powerful, and open source. It’s come a long way in just 3 months thanks to the contributions of Abimanyu Raja, Amanda Wixted, Mike Lundy, David Reid, Christopher Lobay, Hunter Gillane, Nakamatsu Shinji, and everybody that’s given user feedback so far. I recently made a quick screencast for the homepage that I’ll end with so you can see it in action.

Natural language time parsing and more with TimeAPI.org

December 9, 2009

In my post about stdicon.com, I hinted at something else I built in collaboration with a few people that started from a Twitter update. I’m just now writing about it, but I’ve actually built a lot of things I haven’t blogged about yet. Granted, they’re all an artifact of my strange world, but I figure if I need them more than once, there’s a decent chance somebody else on this planet will need them someday. Anyway…

I present to you: TimeAPI.org

It started from wanting Chronic as a Service—a natural language datetime parser written in Ruby. You know, “in five hours” or “noon next tuesday.” Sure, there are good JS libraries, but actually, it’s a backend thing for me (webhooks). Unfortunately, I don’t use or have Ruby available all the time, so as part of my effort to make lots of tiny useful web services, I decided to make it a web service. Or rather, I decided somebody should.

Half an hour after I tweeted it, somebody had a prototype deployed. Then I worked with a friend to functionally polish it up. I’ve been meaning to use it for a simple Tweet-later service, but my first use of it was in Remindify, which was fairly recent (and is something I still need to blog about). In the process, I fixed a few bugs regarding timezones and became thoroughly frustrated with datetime programming. Haven’t we all.

However, the last thing I added was support for format strings. Why? Well, it crossed my mind before, since it would help in environments that have a hard time parsing ISO 8601 formatted datetimes (GLARES FURIOUSLY AT PYTHON). What finally made me implement it was so that I could use TimeAPI with the date command as a sort of cheap ntpdate replacement. Yes, this works:

date -s "$(curl "http://www.timeapi.org/utc/now?\a \b \d \I:\M:\S \Z \Y")"

For convenience, you could use this shortened URL:

date -s "$(curl -L http://j.mp/now-utc)"

Anyway, that’s kind of neat. Obviously, it’s more useful for parsing natural language. It’s a bit dumb when it doesn’t understand your natural language queries (throws up 500), but if you know how to use it and constrain it properly in your app, you’ll be fine.

Somebody else suggested adding the reverse functionality, sort of a time_ago as a service. Not a priority for me, but if anybody wants to add it, this is a completely open source service and I do deploy patches.

Solving Comet to the browser with CometCatchr

November 30, 2009

I’ve been playing a lot with Comet lately. It started with Notify.io, in which I decided to prove that HTTP streaming was a simpler alternative to XMPP in getting messages to the desktop. That went quite well, but it was easy because it wasn’t all that different from a socket connection. Then I built a yet-to-be-announced site that uses real-time updates, and I was forced to deal with Comet to the browser. That’s a bit more complicated.

Actually, I’m arguably a veteran of Comet in the browser. I was doing it before it had a name, all the way back in 2005. A friend and I were using it (without knowing that it was terribly novel) to build a real-time strategy game in the browser called AjaxWar. I haven’t really done a lot with it since, but I was hoping after almost 5 years there would be all kinds of advances in libraries and tricks that would make it super easy.

That was not really the case.

There are things, but not easy things. The Bayeux protocol? I guess all that would be easy to do if there were a lightweight Javascript library for it. But there isn’t really. There’s a jQuery one, but it’s completely undocumented. Plus I was just sitting there thinking, do I need all this? Handshaking? Message envelopes?

I was also hoping for actual persistent connections (that’s what we did in AjaxWar), but it turns out the standard today is long-polling. This is a semi-persistent connection that drops after every message and then reconnects and waits for the next message. I also wanted JSONP and cross-domain support. So I ended up using the dynamic script tag technique:

<script type="text/javascript">
$(document).ready(function(){ waitForMsg(); });

function waitForMsg() {
  $('body').append('\<script type="text/javascript" src="http://mycometserver.com/channel?callback=gotMsg">\<\/script>');
}

function gotMsg(msg) {
  // Do something with it
  waitForMsg();
}
</script>

It’s fairly elegant in its simplicity and cross-browser support. But it has some weird side-effects that I’m not sure if any of the fancier systems got around. For one, it keeps the browser loading. While it’s waiting for messages, the browser says that page is still loading. I also ran into some issues where if I included, say, a Google Calendar widget on the page, it might not decide to keep the connection open (or even start it). I ended up putting a delay on the first call to waitForMsg() until after the calendar widget was likely loaded.

So it’s a bit brittle. You don’t know if it stops working. Therefore you can’t do retries. And you never know if you happen to miss a message between connections (unlikely, but something to worry about). Plus I think if you hit Escape it also kills it.

But this worked well enough for my projects. I knew that if I found a better way, I’d switch over to it, but it was good enough.

…heh…

Then today I decided to solve the problem right once and for all with a project called CometCatchr.

CometCatchr is a lightweight Flash component to be used by Javascript that gives you a persistent connection for Comet streams.

I know a very small number of people won’t agree with my approach using Flash, but I tend to be pragmatic. I trust Flash is generally available and the benefits completely outweigh everything else to me. It’s also not new. Even the Bayeux protocol includes Flash as a supported connection type. Still, I couldn’t find any simple Flash component that gave me what I wanted.

CometCatchr gives me Javascript callbacks on messages, maintains a persistent connection across messages, retries on lost connections, works in all browsers, supports (participating) cross-domain message sources, and just freaking works.

It was a drop-in replacement to my previous technique that worked right out of the box:

<script type="text/javascript">
function gotMsg(msg) {
  // Do something with it
}
</script>

<embed type="application/x-shockwave-flash" width="0" height="0" src="CometCatchr.swf?url=http://mycometserver.com/channel&callback=gotMsg"></embed>

It simplified my code, and not just client-side: now I don’t have to support JSONP callbacks on the server. CometCatchr also parses the JSON messages before passing them to the callback, so that’s taken care of too. I realize it seems less than ideal to couple this with JSON payloads, but I didn’t say it wasn’t an opinionated component.

In fact, it’s very opinionated. It likes single-line JSON messages sent via HTTP using chunked transfer encoding. That’s because that’s how I do Comet streams. Actually, that’s how Twitter does them, too. I’m quite alright with such constraints, but if you want to make changes, it’s MIT licensed and super simple to hack on.
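For a feel of what consuming that kind of stream involves, here is a small sketch of a parser for single-line JSON messages arriving in arbitrary chunks. It is written in Python rather than ActionScript and is not CometCatchr's code; the class name is made up.

```python
import json


class LineJSONParser:
    """Collect raw chunks and emit one parsed message per complete line."""

    def __init__(self):
        self._buffer = b""

    def feed(self, chunk):
        """Add bytes from the stream; return the messages completed so far."""
        self._buffer += chunk
        # Everything before the last newline is complete; the rest is kept.
        *lines, self._buffer = self._buffer.split(b"\n")
        return [json.loads(line) for line in lines if line.strip()]


if __name__ == "__main__":
    parser = LineJSONParser()
    for chunk in (b'{"text": "hel', b'lo"}\n{"text": "again"}\n'):
        for message in parser.feed(chunk):
            print(message["text"])
```

Because a chunk boundary can fall in the middle of a message, the parser only hands back lines that have been terminated by a newline and keeps the remainder for the next chunk.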

So for the time being, I’ve more or less solved simple Comet to the browser, particularly for me. It also might be worth knowing that another motivation for building this component is that I intend to solve Comet and real-time stuff in the browser entirely… but uhh, yeah. Stay tuned.

Web notifications just got real with Notify.io

November 9, 2009

The “real-time web” is a popular topic right now. My WebHooks initiative is both riding on this success and helping make it a reality. One sector of this trend is about notifications. Real-time notifications to you about events you care about.

For a long time we’ve had helper apps like the Google Notifier and more recently the Facebook Desktop Notifications app that bring events from the web to your desktop. Twitter has created a whole ecosystem of clients that not only let you actively check Twitter, but passively get updates from Twitter.

Simultaneously, we’ve had a bunch of systems like Growl emerge that give you a consistent, well-designed and customizable system for local applications to give you notifications. While your IM client is in the background, it can tell you somebody IM’d you and what they said in an unobtrusive way. It integrates with email applications to tell you of new emails. It gives any application developer a nice way to present notifications to the user in a way that’s in their control.

Some of the apps that bring web applications to your desktop like Tweetie, Google Notifier, etc will integrate with Growl (which has a counterpart on pretty much every platform, including the iPhone). The problem is that you only get these notifications when the desktop apps are running, despite the fact web apps are always running. And yes, you have to have an app running for each web application you use.

And that’s only if they built a desktop app and you were convinced to download it. Most web applications will never be able to notify you with any means other than email. But as I’ve argued before, notifications don’t belong in your inbox!

Another minor point is that all these apps use polling to get updates. In some cases this doesn’t matter, but as data starts moving in real-time, this batches your notifications into bursts that you may not be able to parse all at once. I use Tweetie to get Growl notifications from Twitter at the moment, and if a lot of people are updating, I get a huge screen of updates that I don’t have time to read before they disappear. It becomes useless.

A while back I attempted to make an app called Yapper that lets anybody send real-time notifications to your desktop via XMPP. It was an experiment, and ultimately not the answer. It was only part of the solution.

But today I’m announcing the full solution: a free, public, open-source web service called Notify.io (Notify-I-O).

Notify.io integrates with Growl and other local notifiers (as well as email, Jabber, Twitter, and webhooks) and provides a dead-simple API for any web developer to send real-time notifications to their users.

You can think of Notify.io as a web-level Growl system. It empowers users with a consistent, controllable way to get notifications, and it provides developers with a simple, consistent way for sending those notifications.

Notify.io is an open platform for notifications. It’s still in a pre-alpha state, but it already has several useful notification sources. Last Thursday I built Feed Notifier, which uses PubSubHubbub to give you real-time desktop notifications of Atom and RSS feed updates.

At SHDH 35 last Saturday, Abi Raja built a Facebook notification adapter for Notify.io that’s yet to be released. And there are a couple more in the pipeline (by me and others) to show the power of Notify.io.

Again, it’s pre-alpha, so before I talk much more about it, I should probably finish more of it. I just wanted to make sure I blogged about it in somewhat of a timely fashion. I seem to have a backlog of blog posts about apps I’ve built recently. However, Notify.io is a pretty significant one. Feel free to check it out, just remember that despite its looks, it’s nowhere near finished — but it does work.

Getting webhook callbacks when people click-through links

August 13, 2009

So I recently put this blog’s feed through FeedBurner. If I recall, I think I wanted to get subscriber statistics, as well as to try out their integration of PubSubHubbub publishing. But since I didn’t think I would get new subscribers often and I’m too lazy to check stats, I really wanted a way to be notified when people subscribed. Since the means of subscribing would be a link, it hit me: click-through webhooks.

I wanted a link wrapper that would trigger a URL callback (aka a webhook) when people clicked through the link. Similar to a URL shortener, you’d just give it a URL and it would give you another URL, but instead of being shorter, it would be tied to a callback that would run as it redirected the user to the original URL. What could you do with this, you ask? It’s a webhook; you can do anything with this event.
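The core of such a link wrapper is tiny: look up the link, fire the callback, redirect. A sketch with the HTTP POST injected as a function so it runs without a network; the payload sent to the callback and all names here are assumptions, not how ClickHooks actually works.

```python
class ClickThroughLinks:
    """Toy link wrapper: fire a webhook, then redirect to the original URL."""

    def __init__(self, post):
        self._post = post  # callable(url, data) that performs the webhook POST
        self._links = {}

    def wrap(self, link_id, target_url, callback_url):
        self._links[link_id] = (target_url, callback_url)

    def click(self, link_id):
        target, callback = self._links[link_id]
        self._post(callback, {"url": target})  # payload fields are assumed
        return 302, {"Location": target}


if __name__ == "__main__":
    links = ClickThroughLinks(post=lambda url, data: print("POST", url, data))
    links.wrap("abc", "http://example.com/feed", "http://example.com/hook")
    print(links.click("abc"))
```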

Coming back to my original use case, I’d probably tie it to a script that sent me an IM that somebody subscribed. Or better yet, with something like Yapper, I could get a Growl notification. Sweet, right?

I knew it would be trivial to build, and even though it was a feature that I really wanted, it was still just a feature. I thought, it really doesn’t need its own app, does it? So I went to my URL shortener of choice, tr.im, and suggested it as a feature. I also happened to mention to all my friends they should vote it up. Well, it ended up being the 6th most requested feature because of that. I thought for sure they’d implement it. I mean it was so much easier than the others, and I gave them code to help do it and everything.

A couple weeks went by and nothing happened. I got no response. I emailed them and got nothing back. They didn’t even mark it as “under review.” It may have had to do with the fact they were then deciding to shut down … which I hear they’ve changed their mind about. Nevertheless, it still hadn’t happened. And I really wanted to be able to do this notification on subscription thing!

Along came my WebHooks and PubSubHubbub meetup where I wanted to demo this bit of plumbing. Of course, I had no way to do it because nobody had implemented it. So I figured I’d just build it that day before the meetup. Twenty minutes later, I had it live: ClickHooks.

Okay, so that part’s done. How do I get it to IM me? Well, Scriptlets was made to write the glue code to use with these kinds of webhooks. And my project Protocol Droid was made to make it easy to use other protocols from HTTP. So I just threw this little baby on Scriptlets and I was done:

import urllib

payload = {
    'username': 'demo@progrium.com',
    'password': 'secret',
    'to': 'progrium@gmail.com',
    'body': "You got a new subscriber on blogrium!"
}

fetch("http://pdroid.progrium.com/xmpp:talk.google.com/send", urllib.urlencode(payload), "POST")

Yes, that pdroid endpoint is live. No, I don’t recommend using it for production. Stand up your own Protocol Droid gateway. ;)

Anyway, simple enough to understand though, right? It should be. And it was simple to get set up. That’s the whole point of a world of webhooks: you can easily do so much cool stuff if this simple infrastructure is there.

As an aside, later I realized I could generalize my IM scriptlet to use a GET param for the body of the message, allowing me to make a personal messaging micro-webservice: http://www.scriptlets.org/abcdef?body=Hello, world! The data in this URL could then be hidden behind a URL shortener, giving me a regular looking URL that I could then give to people that would IM me the message when they clicked it. Kind of silly, but I almost set up a link that would IM me “At the door!” that people could click from their phone browsers as a doorbell for the WebHooks meetup.
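One detail worth spelling out: a message like "Hello, world!" needs URL-encoding before it goes into the query string. A quick sketch using Python 3's urllib.parse (the scriptlet above uses the older Python 2 urllib); the scriptlet URL is the placeholder from the paragraph above.

```python
from urllib.parse import urlencode


def message_link(scriptlet_url, body):
    """Build a link that passes the message text as the 'body' GET parameter."""
    return scriptlet_url + "?" + urlencode({"body": body})


if __name__ == "__main__":
    print(message_link("http://www.scriptlets.org/abcdef", "At the door!"))
```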

Ah, fun with infrastructure.

Yapper, a Jabber/XMPP interface for Growl

June 28, 2009

Update: I’ve basically stopped development and support of Yapper because Notify.io is the right way to solve this problem. However, this post describes that problem and is still worth the read. ;)

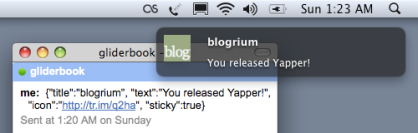

Today I released Yapper, a full featured Jabber/XMPP interface for Growl. It was based on the simple Twisted script I wrote while building my notification email to Growl piping. Although it’s limited to OS X users, I still think this is a big deal. Let me explain.

If you haven’t heard of Growl, it’s a global notification system that lets applications notify you of events in a consistent, customizable way. It’s been around for a while and is integrated with or has plugins for lots of popular apps like iTunes, Adium, and Tweetie. It has bindings for a bunch of languages and also has a command line tool that you shell scripters can use to pipe notifications into. For example, I used this recently for my reminder system.

I think Growl is great particularly because it very nicely solves passive real-time notifications. That is, you want to be notified, but you don’t want it to interrupt you by requiring action. This is why I hate email notifications.

However, the major problem with Growl is that for the most part, it’s limited to local notifications. Sure, you can get notifications from “out there,” like Twitter or IRC or Gmail … but it requires you to have a local application that pushes them into Growl. One for each, in fact.

It turns out, I don’t actually care about most local notifications. Telling me a file is done downloading or what song is playing in iTunes is not terribly notification worthy. If I’m sitting there to get the notification, I probably already know. The most useful notifications are of events important to me that I’m nowhere near … events from things out on the Internet.

So Growl needs a network interface. Oh, wait! It has one! Well, what’s the problem? Two things: it’s a non-standard protocol, and it requires a direct connection. That not only raises the bar for things “out there” to notify you with Growl, but it literally makes it impossible if you’re constantly changing IPs, sitting behind a firewall or NAT, etc.

Let’s see… who’s solved this problem already? Right, IM! XMPP, the open standard for IM and real-time message passing (also known as Jabber), seems to have everything we need. It’s a popular protocol, fairly accessible from any language, and doesn’t require a direct point to point connection. So it’s a perfect transport for network Growl notifications!

Since I’m not one to wait around for people to implement what I think they should implement, I solved this problem by making Yapper. Yapper is a lightweight Jabber client made specifically to receive Growl notifications. It starts when your machine starts and sits in the background just waiting for whatever you have sent to it so it can pop it up with Growl.

You can now easily have Growl notifications sent to you from anywhere. Websites have less of a reason not to directly notify you with Growl for events that are important to you. More importantly for me, I can more easily set up Growl notifications for whatever I want. With the rise of webhooks, so can you. Anyway, to me this generally makes Growl much more useful.

Give Yapper a look. It’s on GitHub under the MIT license. It’s the first release, but it should work fine. If not, file an issue and it’ll likely be taken care of.

Fun with Internet Plumbing

June 22, 2009

The other day I realized I hate notification emails. In particular I’m talking about SVN commit notifications. I have them set up to skip my inbox and filter into a label on Gmail, but this doesn’t really help. They don’t pollute my inbox, but I still have to go out of my way to read them. Or at the very least, mark them as read, which is still quite annoying!

Growl is supposed to help solve this problem. In fact, when I was retooling the notification system at work, I would have loved to integrate Growl … except that Growl’s network interface requires a direct connection.

Taking a step back, it’s not just work notifications. A lot of us get a lot of notification emails about a lot of things. Most of them are poorly designed, for example, without important details of the notice in the subject. Plus, as email they require disruptive action. Not just to open and read if you have to, but just to archive and/or mark as read.

It’s great to have an archive in email, but email is just not ideal for notifications. If I’m around to receive them, I should get the important information passively and not have to do anything else until I need to reference it later, if ever. That means Growl.

Getting Notification Emails to Growl

I thought to myself, “I should be able to rig this up. And it should be easy.” And if the infrastructure and plumbing I’ve wanted was in place, it would be easy. So I decided to lay it down and make it happen. Here’s what I needed:

- An email to webhook bridge, like my old Mailhook service.

- An XMPP to Growl bridge. We all want this anyway, right?

- An HTTP to XMPP bridge. Why? You’ll see.

- Glue code that can run in the cloud. This is what Scriptlets is for.

I’ve since turned off Mailhook, but I’ve been planning to roll its code into my still-early Protocol Droid project. This is an HTTP to anything bridge written in Python with Twisted that makes heavy use of webhooks. I planned to roll the HTTP to XMPP bridge in there as well. For now, the XMPP to Growl bridge would be separate.

Having all that, here was the pipeline I envisioned:

- A notification email would come into Gmail and get filtered

- It would forward to an address that points to Protocol Droid

- Protocol Droid would post the email to a script on Scriptlets

- The script would use Protocol Droid again to send an XMPP message

- The message would be sent to a JID for my laptop if online

- The XMPP to Growl bridge on my laptop would receive and notify me
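The glue in the middle of that pipeline is mostly about boiling an email down to a line short enough for a Growl popup. Here is a rough sketch of that step in shell, to match the other scripts on this blog. It is not the script that ran on Scriptlets: the function names `email_header` and `notification_text`, the sample message, and the example.com addresses are all made up for illustration, and folded (multi-line) headers are not handled.

```shell
#!/bin/bash
# Hypothetical glue step: reduce a raw notification email to one short line.
# None of these names come from Scriptlets or Protocol Droid.

# Print the value of header $1 from the raw message on stdin.
# Only the header block (everything before the first blank line) is searched.
email_header() {
  awk -v want="$1" '
    /^\r?$/ { exit }                      # a blank line ends the headers
    {
      i = index($0, ":")
      if (i > 0 && tolower(substr($0, 1, i - 1)) == tolower(want)) {
        value = substr($0, i + 1)
        sub(/^[ \t]+/, "", value)         # trim leading whitespace
        sub(/\r$/, "", value)             # tolerate CRLF line endings
        print value
        exit
      }
    }'
}

# Build the text that would be passed along as the XMPP message body.
notification_text() {
  local raw from subject
  raw=$1
  from=$(printf '%s\n' "$raw" | email_header From)
  subject=$(printf '%s\n' "$raw" | email_header Subject)
  printf '%s: %s\n' "$from" "$subject"
}

# Made-up sample message, for illustration only.
sample='From: svn@example.com
To: me@example.com
Subject: r1234 - fix login redirect

Changed paths: ...'

notification_text "$sample"
```

For the sample message this prints the sender followed by the subject, which is about all a passive notification needs. The full email stays in the archive for later reference.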

Building the Infrastructure

I decided to build all this last Friday night. I got caffeinated and went to work, starting with Protocol Droid. I hadn’t touched it since I was trying to build an HTTP to SMTP module for it. It wasn’t part of this project, but I decided to finish it off. You know, to get warmed up and have an early win.

That happened pretty quickly, so I was excited to get started on the next task: an email to webhook bridge. This was a little different. So far everything in Protocol Droid was for outgoing connections. This would be the first listening module. The idea was that it would be an SMTP server that would parse incoming email and post it to a callback URL registered for a recipient domain. I’d written several of these before for mailhook.org, so I ported the code to Twisted for Protocol Droid. Another pretty easy win. I used PostBin to debug this, obviously.

At that point, I decided to take a break and watch an episode of Pushing Daisies. I still haven’t seen all of the second season!

Then came the hard part. Well, I thought it would be easy. The HTTP to XMPP bridge. It turns out the XMPP support in Twisted isn’t very well documented and slightly underdeveloped. I had to build a couple of prototypes to get the hang of it. Even then, building this module and the XMPP to Growl daemon took about 7 hours, compared to the 3 hours spent on the previous two modules! XMPP is definitely a bit hard to really get into.

By 8am I had all the pieces working. I thought about going the last mile, getting it all online and setting up the pipeline … but I decided I deserved some sleep. I’d do it when I woke up.

Getting the Pipeline in Place

When I woke up on Saturday, I was still burnt out from coding. I spent the day hanging out with friends. Then later I invited some more friends over for hacking at my place. There I spent more time than I wanted getting my code to run on my server. Apparently OpenSSL for Python wasn’t installed and the Google Talk servers required TLS. It took a while to figure this out since no error was raised and Google would just drop my connection.

I finally got all the infrastructure I built online and tested. Finally, I could work on the actual pipeline. It was supposed to be easy from that point on. And it was! Except for one bit …

I got almost all the pieces connected and it all came down to the glue code on Scriptlets. The script would parse the email however I like and set up the message that would end up on my laptop via Growl. It turns out Scriptlets is a bit of a pain to use. No edit, no debugging, crappy error messages. Since I built it as a proof of concept, I hadn’t done much development on it, let alone with it. Anyway, I learned a lot and have some things to fix. But! I was still able to get everything working!

I had my friend Adam send the first test email that would go through the entire pipeline I outlined above. He sent it from his phone, just to add a little to the magic. Lo and behold, it worked perfectly, quite quickly. It took 4 seconds from him sending to the notice appearing … but about 3 of those seconds were the time it took for the phone to send the email. Nevertheless, brilliant!

Next Steps

I’ve since found out about Wokkel, which might simplify some of my XMPP code. I have some other enhancements to make to each piece to make them more general. And I mentioned some of the things I need to add to Scriptlets.

Functionally, the pipeline is pretty good. The only addition I can think of would require an HTTP to IMAP bridge in Protocol Droid (something I’ve already prototyped). I’d use this to mark the notification email as unread if it wasn’t able to deliver the IM. But for now, I think I’m done on this project. And you can all take advantage of the infrastructure I built out for rigging up your own cool hacks.

Most of the code this weekend went into these two projects, so check them out!

Reminders with Quicksilver and Growl

June 13, 2009

For my todo system, I’ve been using Quicksilver with Remember the Milk for a while. It’s great for being able to throw things into my todo list at any time (as long as I’m at my laptop). Especially with its natural language date parsing: “buy some cheerios tomorrow” or “see brothers bloom tuesday.” However, it’s not a very good reminder system.

Occasionally I’d use it with a specific time (“dentist appointment tomorrow 9am”), but for the most part, Remember the Milk todos are not about micro time management within the day. Especially since they don’t have a good notification method. So for reminders, I had to build my own system. Here’s what I wanted:

- Simple text scheduling from Quicksilver

- Growl for the reminder

- Natural scheduling (“go home at 7pm”, “eat lunch in 5m”)

Since most Unix systems (including OS X) have the `at` command for scheduling commands with a decent syntax, and Growl has the `growlnotify` command, I decided to start at the command line with a shell script.

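#!/bin/bash
# Usage: growlat.sh "go home at 7pm"   or   growlat.sh "eat lunch in 5m"
growlnotify=/usr/local/bin/growlnotify

# Treat "in" like "at" so there is only one separator to split on
normal=$(echo "$1" | sed -e 's/ in / at /')

# Split on the last " at ": reminder text before it, time after it
what=${normal% at *}
when=${normal##* at }

# Expand "10m" / "2h" into the relative form `at` expects
when=$(echo "$when" | sed -e 's/^\([0-9]*\)m$/now + \1 minutes/')
when=$(echo "$when" | sed -e 's/^\([0-9]*\)h$/now + \1 hours/')

# Schedule a sticky notification; $when stays unquoted so `at` sees separate words
result=$(echo "$growlnotify -s -m '$what' > /dev/null 2>&1" | at $when 2>&1)
if [ $? -eq 0 ]; then
  # Keep only the time from at's "job N at <time>" output
  result=${result#* at }
  result="Scheduled for $result"
fi

# Report the scheduled time (or at's error message) right away
$growlnotify -m "$result"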

This script takes a single argument string in the format I described above, splitting $what and $when with either “at” or “in”. Then I expand relative time (“10m” or “2h”) into the format `at` wants (“now + 10 minutes” — which I didn’t want to have to type). It schedules the command to use `growlnotify` with $what (as a sticky notification, so it won’t go away until I click it), and then immediately tells you when it was scheduled for if successful, or it tries to show you the error if not.
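If you want to poke at the string handling without `at` or Growl installed, the splitting and expansion can be pulled out into two small functions. This is only a sketch of the same idea: the names `expand_when` and `split_reminder` are mine and not part of the script, and this version splits on the last “ at ” in the string.

```shell
#!/bin/bash
# Hypothetical helpers for trying out the parsing on its own.
# The function names are illustrative and do not appear in growlat.sh.

# Expand "10m" / "2h" into the relative form that `at` accepts.
# Anything else (for example "7pm") passes through unchanged.
expand_when() {
  printf '%s\n' "$1" | sed -e 's/^\([0-9]*\)m$/now + \1 minutes/' \
                           -e 's/^\([0-9]*\)h$/now + \1 hours/'
}

# Split "<what> at <when>" or "<what> in <when>" and print
# the reminder text and the expanded time on separate lines.
split_reminder() {
  local normal what when
  normal=$(printf '%s\n' "$1" | sed -e 's/ in / at /')
  what=${normal% at *}      # everything before the last " at "
  when=${normal##* at }     # everything after the last " at "
  printf '%s\n' "$what"
  expand_when "$when"
}

split_reminder "eat lunch in 5m"
split_reminder "go home at 7pm"
```

The first call prints “eat lunch” and then “now + 5 minutes”; the second prints “go home” and then “7pm”, which `at` understands as-is.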

So that works fine. Now Quicksilver. You can write new actions in AppleScript, but I didn’t want to try and figure out how to write the above in AppleScript. It was hard enough figuring it out in bash. So the AppleScript action is just going to call out to that shell script.

using terms from application "Quicksilver"
    on process text str
        do shell script "/Users/Jeff/.scripts/growlat.sh \"" & str & "\" > /dev/null 2>&1"
        set selection of application "Quicksilver" to str
    end process text
end using terms from

Apparently AppleScript files are not plain text, so you have to write it using Script Editor. Make sure the path to growlat.sh points to the shell script above. Then save this as Remind.scpt in your user’s “~/Library/Application Support/Quicksilver/Actions” directory. If it doesn’t exist, just make the directory. Restart Quicksilver and you should now have a Remind action! It’ll look like this: